In agile development, the user story is the fundamental unit of value delivery. Yet teams too often find themselves stalled by stories that are vague, incomplete, or open to interpretation. When a story lacks clarity, the cost is measured not only in time but also in rework, technical debt, and friction between product owners and development teams. This guide shows how to troubleshoot weak user stories, with a focus on eliminating ambiguity and establishing robust acceptance criteria.

Weak stories act as a bottleneck. They force developers to make assumptions, and wrong assumptions lead to implementation errors. When assumptions diverge from stakeholder intent, the cycle of correction begins. Fixing this requires a systematic approach to story creation, refinement, and validation. We must move beyond the surface level of “I want a feature” and examine the structure of the work item itself.

🚩 Identifying the Symptoms of a Weak Story

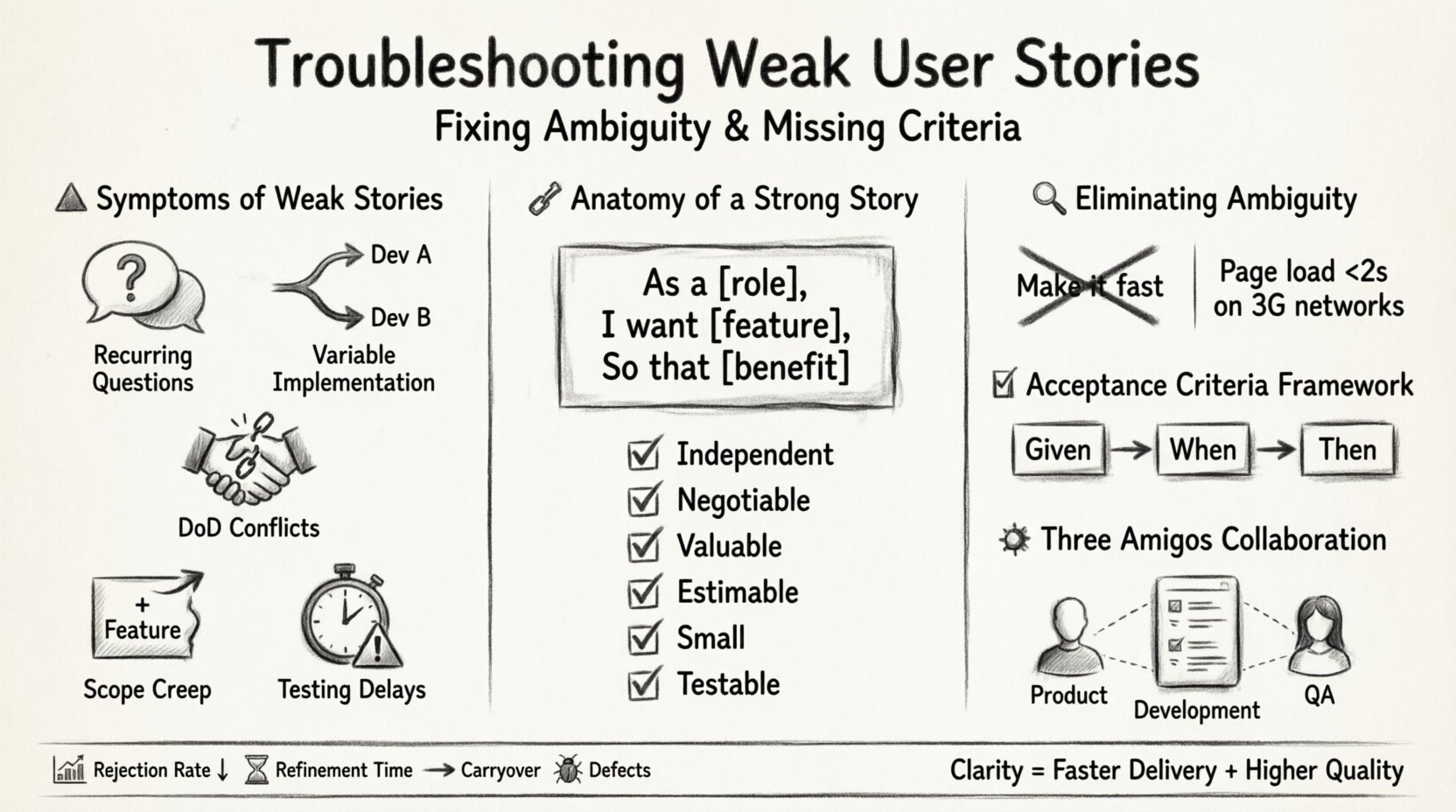

Before fixing the problem, one must recognize it. Weak user stories rarely announce themselves with a warning label. Instead, they reveal themselves through the behavior of the team and the quality of the output. Here are the primary indicators that a story requires immediate attention:

- Recurring Questions: If developers ask the same clarification questions during the sprint, the story was not written clearly enough.

- Variable Implementation: Two developers build the same feature differently because the requirements allowed for interpretation.

- Definition of Done Conflicts: The team agrees the work is complete, but stakeholders disagree on the value delivered.

- Scope Creep: The story grows during development because edge cases were not defined beforehand.

- Testing Delays: QA cannot write test cases because the expected behavior is not documented.

These symptoms suggest that the story is not a reliable contract between the business and the engineering team. Addressing these symptoms requires a shift in how we draft and review these artifacts.

📐 The Anatomy of a Strong User Story

A strong user story is more than a sentence. It is a structured communication tool. While frameworks exist, the core principle remains constant: clarity and testability. A well-constructed story adheres to specific structural requirements that ensure everyone involved shares the same understanding.

1. The Core Template

The standard format provides a baseline for communication. It focuses on the user, the need, and the benefit.

- As a [role], I want [feature], so that [benefit/value].

While this template is simple, it forces the writer to consider the “who” and the “why.” Missing either component often leads to weak stories. For example, stating “I want a login button” without specifying the user role or the benefit leaves the implementation open to guesswork. Is it for admin users? Is it for public access? Does it need biometric authentication or a password?
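One way to catch a missing “who” or “why” early is to check a story sentence against the template mechanically. The sketch below is only an illustration: the `parse_story` function, the `StoryParts` type, and the regular expression are hypothetical names and choices made for this example, and a real backlog would need a more forgiving pattern.

```python
import re
from typing import NamedTuple, Optional


class StoryParts(NamedTuple):
    """The three parts of the core template (hypothetical helper type)."""
    role: str
    feature: str
    benefit: str


# Assumed pattern for "As a [role], I want [feature], so that [benefit]."
STORY_PATTERN = re.compile(
    r"^As an? (?P<role>.+?), I want (?P<feature>.+?),? so that (?P<benefit>.+?)\.?$",
    re.IGNORECASE,
)


def parse_story(text: str) -> Optional[StoryParts]:
    """Return role, feature, and benefit, or None if the template is not met."""
    match = STORY_PATTERN.match(text.strip())
    if match is None:
        return None
    return StoryParts(match["role"], match["feature"], match["benefit"])


# The login example from the text has no role and no benefit, so it is rejected.
incomplete = parse_story("I want a login button")

# A complete sentence yields all three parts.
complete = parse_story(
    "As a registered customer, I want to log in with my email and password, "
    "so that I can view my order history."
)
```

Here `incomplete` is `None`, which signals that the story needs a role and a benefit before it can be refined further.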

2. INVEST Criteria

To ensure a story is viable for development, it should be evaluated against the INVEST model. This mnemonic serves as a checklist for story health.

- Independent: The story should not rely on the completion of another story to be valuable or testable.

- Negotiable: Details should remain open to discussion rather than being fixed as rigid specifications.

- Valuable: The story must deliver value to the end user or business.

- Estimable: The team must have enough information to provide a size estimate.

- Small: The story must be small enough to be completed within a single sprint.

- Testable: There must be a clear way to verify the story is complete.

When a story fails the “Testable” or “Estimable” criterion, it is inherently weak. Ambiguity destroys estimability: if the team cannot determine the effort, they cannot plan the sprint.
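The INVEST checklist can be recorded as a simple structure during refinement so that failures are explicit rather than remembered. This is a minimal sketch; the `InvestCheck` class and `failed_criteria` function are hypothetical names, and the true/false answers still come from team discussion, not from code.

```python
from dataclasses import dataclass, fields


@dataclass
class InvestCheck:
    """One yes/no answer per INVEST criterion, as judged by the team."""
    independent: bool
    negotiable: bool
    valuable: bool
    estimable: bool
    small: bool
    testable: bool


def failed_criteria(check: InvestCheck) -> list[str]:
    """Return the names of the criteria the story does not meet."""
    return [f.name for f in fields(check) if not getattr(check, f.name)]


def is_ready(check: InvestCheck) -> bool:
    """A story is ready for a sprint only when no criterion fails."""
    return not failed_criteria(check)


# Example: an ambiguous story that the team can neither estimate nor test.
ambiguous_story = InvestCheck(
    independent=True,
    negotiable=True,
    valuable=True,
    estimable=False,
    small=True,
    testable=False,
)
```

Calling `failed_criteria(ambiguous_story)` lists `estimable` and `testable`, which points the refinement session at the gaps to close first.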

🔍 Diagnosing Ambiguity in Practice

Ambiguity is the enemy of execution. It often hides in vague verbs and undefined states. To troubleshoot ambiguity, we must scrutinize the language used in the story description and the associated requirements.

Common Ambiguous Phrases

Certain words trigger multiple mental models. When writing stories, avoid these terms unless they are explicitly defined in a glossary or context.

- “Fast”: Does this mean 200ms response time or 2 seconds? Is it for mobile or desktop?

- “Secure”: Does this mean encryption in transit, role-based access control, or encrypted storage at rest?

- “User-friendly”: This is subjective. It needs to be translated into specific usability metrics or design constraints.

- “Ensure”: Ensure what? What happens if the condition is not met?

- “Various”: How many? Which types?
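A simple text check can flag these terms before a story reaches refinement. The sketch below uses only the five words listed above; the `VAGUE_TERMS` tuple and `find_vague_terms` function are hypothetical, and a team would likely extend the list from its own glossary.

```python
import re

# Terms taken from the list above; extending it is left to the team (assumption).
VAGUE_TERMS = ("fast", "secure", "user-friendly", "ensure", "various")


def find_vague_terms(story_text: str) -> list[str]:
    """Return the vague terms found in the text, in the order of VAGUE_TERMS."""
    lowered = story_text.lower()
    found = []
    for term in VAGUE_TERMS:
        # Word boundaries avoid false hits such as "fast" inside "breakfast".
        if re.search(rf"\b{re.escape(term)}\b", lowered):
            found.append(term)
    return found


flagged = find_vague_terms("The checkout page must be fast and secure.")
```

A non-empty result is a prompt for a question, not an automatic rejection: the term may already be defined elsewhere in the story or in the team glossary.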

The Cost of Assumptions

When ambiguity exists, developers fill the gap with assumptions. Sometimes these assumptions are correct, but often they are not. The cost of correcting a wrong assumption after code has been written is significantly higher than clarifying it during the refinement phase.

Consider a story that says “Allow users to upload files.” The developer assumes PDF, JPG, and PNG, but the product owner intended only PDFs, so the JPG and PNG support is wasted work. Alternatively, the developer assumes a 5MB limit while the business requires 500MB, so legitimate uploads are rejected once the feature ships. Both discrepancies arise from missing criteria.
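Writing the upload rules down as explicit values removes both guesses. The sketch below is illustrative only: `UploadPolicy`, `PDF_ONLY`, and `check_upload` are hypothetical names, and combining the PDF-only rule with the 500MB limit in one policy is an assumption made for the example.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class UploadPolicy:
    """Explicit upload rules that would otherwise be left to assumption."""
    allowed_extensions: frozenset
    max_size_bytes: int


# Assumed values, combining the two scenarios from the text.
PDF_ONLY = UploadPolicy(
    allowed_extensions=frozenset({".pdf"}),
    max_size_bytes=500 * 1024 * 1024,
)


def check_upload(filename: str, size_bytes: int, policy: UploadPolicy) -> list[str]:
    """Return a list of rule violations; an empty list means the upload is accepted."""
    problems = []
    extension = os.path.splitext(filename)[1].lower()
    if extension not in policy.allowed_extensions:
        problems.append(f"file type '{extension}' is not allowed")
    if size_bytes > policy.max_size_bytes:
        problems.append("file exceeds the size limit")
    return problems
```

With the policy written out, `check_upload("photo.jpg", 1024, PDF_ONLY)` reports a file type violation, and the developer never has to guess whether images were in scope.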

📝 Crafting Acceptance Criteria

Acceptance criteria are the conditions that must be met for the story to be considered done. They are the contract of quality. Without them, testing is subjective.

Best Practices for Writing Criteria

- Be Specific: Avoid subjective language. Use numbers, ranges, and specific states.

- Focus on Behavior: Describe what the system does, not how it does it.

- Include Edge Cases: Define what happens when things go wrong (e.g., network failure, invalid input).

- Use Scenarios: Scenario-based writing helps visualize the user flow.

The Given-When-Then Format

This structure, often associated with behavior-driven development, provides a logical flow for criteria. It separates context, action, and outcome.

- Given: The initial context or state of the system.

- When: The action performed by the user or system.

- Then: The expected outcome or result.

Example:

- Given the user is logged in with an active subscription,

- When they attempt to download a premium report,

- Then the download starts immediately without a payment prompt.

This format forces the writer to think about preconditions and consequences. It reduces the likelihood of missing a scenario.
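The same scenario maps directly onto an automated test, with one comment per clause. In the sketch below, `User`, `DownloadResult`, and `request_premium_report` are hypothetical stand-ins for the real system, and the behavior for users without an active subscription is an assumption, since the example criterion does not specify it.

```python
from dataclasses import dataclass


@dataclass
class User:
    logged_in: bool
    subscription_active: bool


@dataclass
class DownloadResult:
    started: bool
    payment_prompt_shown: bool


def request_premium_report(user: User) -> DownloadResult:
    """Hypothetical stand-in for the system under test."""
    if user.logged_in and user.subscription_active:
        return DownloadResult(started=True, payment_prompt_shown=False)
    # Assumed fallback: the original criterion does not cover this case.
    return DownloadResult(started=False, payment_prompt_shown=True)


def test_subscriber_downloads_premium_report_without_payment_prompt():
    # Given the user is logged in with an active subscription
    user = User(logged_in=True, subscription_active=True)
    # When they attempt to download a premium report
    result = request_premium_report(user)
    # Then the download starts immediately without a payment prompt
    assert result.started
    assert not result.payment_prompt_shown
```

Writing the fallback branch exposes a gap in the criterion itself: what should happen for a logged-in user whose subscription has lapsed is a question for the product owner, and it would warrant its own Given-When-Then scenario.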

🛠️ Acceptance Criteria vs. Definition of Done

It is common to confuse Acceptance Criteria with the Definition of Done (DoD). While related, they serve different purposes.

- Acceptance Criteria: Specific to the individual story. It defines what makes that feature work correctly.

- Definition of Done: Global to the team or project. It defines the quality standards applied to all stories (e.g., code reviewed, tests passed, documentation updated).

A weak story often tries to include DoD items in the Acceptance Criteria, or vice versa. Keeping them separate ensures clarity. The DoD is the baseline; the Acceptance Criteria are the specific targets.
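The separation can be made concrete by tracking the two lists independently and requiring both to pass. This is a minimal sketch: `DEFINITION_OF_DONE` reuses the three example items mentioned above, while `is_story_done` and the criterion names in the example are hypothetical.

```python
# Team-wide baseline, using the example items from the text.
DEFINITION_OF_DONE = ("code reviewed", "tests passed", "documentation updated")


def is_story_done(acceptance_results: dict, dod_results: dict) -> bool:
    """A story is done only when its own criteria and the shared DoD both pass."""
    # A story with no acceptance criteria at all cannot be verified as done.
    criteria_met = bool(acceptance_results) and all(acceptance_results.values())
    # Any DoD item that was not recorded counts as not met.
    dod_met = all(dod_results.get(item, False) for item in DEFINITION_OF_DONE)
    return criteria_met and dod_met


# Story-specific criteria pass, but one shared DoD item is still open.
done = is_story_done(
    acceptance_results={"download starts without payment prompt": True},
    dod_results={"code reviewed": True, "tests passed": True},
)
```

In this example `done` is `False` because the documentation item is missing, even though the feature itself behaves correctly.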

🧩 Refinement Strategies

Writing a strong story is a collaborative effort. It rarely happens in isolation. Refinement sessions are the primary mechanism for fixing ambiguity before work begins.

The Three Amigos

This technique involves collaboration between three perspectives: Product (Business), Development (Engineering), and Quality Assurance (Testing). Each brings a unique lens to the story.

- Product: Focuses on the user need and value.

- Development: Focuses on technical feasibility and implementation details.

- QA: Focuses on edge cases and how to verify the behavior.

When these three discuss a story, gaps in logic are exposed early. The developer might say, “That feature requires an API that doesn’t exist yet.” The tester might ask, “How do we test this if the data isn’t there?” This conversation prevents the story from moving forward until it is robust.

Visual Aids

Text alone is often insufficient. Diagrams, wireframes, and flowcharts can clarify complex logic. A simple sequence diagram can show how data moves between services. A mockup can define layout constraints. Visuals reduce the cognitive load on the reader and minimize misinterpretation.

📊 Common Scenarios and Fixes

To illustrate the troubleshooting process, consider the following table of common weak story patterns and their corresponding fixes.

| Weak Pattern | Why It Fails | Recommended Fix |
|---|---|---|
| “Improve performance.” | Subjective and unmeasurable. | Specify metric: “Reduce page load time to under 2 seconds on 3G networks.” |
| “Handle errors gracefully.” | “Gracefully” is undefined. | Define behavior: “Show an error message that names the failed action and the next step, and log the stack trace.” |
| “Integrate with the database.” | Missing details on schema and constraints. | Specify data model: “Create a table for user preferences with fields X, Y, Z.” |
| “Make it accessible.” | Lacks specific standards. | Cite standard: “Meet WCAG 2.1 Level AA, including color contrast and screen reader support.” |
| “Send a notification.” | Missing trigger and channel. | Detail trigger: “Send an email when order status changes to ‘Shipped’.” |

Reviewing your backlog using this table structure can quickly highlight areas that need attention. If a story looks like the “Weak Pattern” column, it requires refinement before it enters a sprint.

📈 Measuring Story Health

How do you know if the troubleshooting is working? You need metrics. Tracking the health of user stories provides feedback on the quality of the requirements process.

- Rejection Rate: How many stories are rejected by QA or stakeholders after implementation? A high rate indicates poor initial criteria.

- Refinement Time: How long does it take to clarify a story? If refinement sessions drag on, the story may be too complex or poorly defined initially.

- Carryover Rate: How many stories are not completed within the sprint? Ambiguity often leads to scope creep, causing stories to spill over.

- Defect Density: How many bugs trace back to requirements rather than code? A high share of requirement-related defects suggests weak criteria.

Tracking these metrics allows the team to adjust their process. If the rejection rate is high, the team might need to spend more time on the “Three Amigos” discussion or invest in better template training.
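Two of these metrics can be computed from very little data per story. The sketch below assumes each rate is a simple share of all stories in the sprint; that definition, along with the `StoryOutcome` record and the function names, is an assumption for illustration, and teams may prefer a different denominator.

```python
from dataclasses import dataclass


@dataclass
class StoryOutcome:
    """Minimal per-story record for one sprint (hypothetical shape)."""
    story_id: str
    completed_in_sprint: bool
    rejected_after_implementation: bool


def _share(count: int, total: int) -> float:
    """Return count / total, or 0.0 for an empty sprint."""
    return count / total if total else 0.0


def rejection_rate(outcomes: list) -> float:
    """Share of stories rejected by QA or stakeholders after implementation."""
    rejected = sum(o.rejected_after_implementation for o in outcomes)
    return _share(rejected, len(outcomes))


def carryover_rate(outcomes: list) -> float:
    """Share of stories not completed within the sprint."""
    carried = sum(not o.completed_in_sprint for o in outcomes)
    return _share(carried, len(outcomes))


sprint = [
    StoryOutcome("S-1", completed_in_sprint=True, rejected_after_implementation=False),
    StoryOutcome("S-2", completed_in_sprint=True, rejected_after_implementation=True),
    StoryOutcome("S-3", completed_in_sprint=False, rejected_after_implementation=False),
    StoryOutcome("S-4", completed_in_sprint=True, rejected_after_implementation=False),
]
```

The absolute numbers matter less than the trend: comparing the same two rates sprint over sprint shows whether changes to refinement are having an effect.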

🔄 The Feedback Loop

Improving user stories is not a one-time task. It requires a continuous feedback loop. After a sprint, the team should review the stories that caused friction. Did the developers struggle with the criteria? Did the QA team find gaps?

Use retrospective data to update the story templates. If a specific type of ambiguity keeps appearing (e.g., missing error states), add a mandatory section for error handling in the story template. This evolution ensures that the team learns from its mistakes and continuously improves the quality of its output.

🧱 Building a Culture of Clarity

Finally, fixing weak stories is a cultural shift. It requires leadership support and a shared commitment to quality. When stakeholders understand that clear stories lead to faster delivery and higher quality, they are more likely to invest time in the refinement process.

Encourage a mindset where asking questions is praised, not penalized. If a developer is unsure about a story, they should feel empowered to pause and seek clarification rather than guessing. This prevents the accumulation of technical debt and rework.

Training is also essential. Not every team member knows how to write a testable acceptance criterion. Provide resources, workshops, or examples to elevate the writing skills of the entire team. When everyone speaks the same language of requirements, friction decreases.

🚀 Conclusion

Troubleshooting weak user stories is about more than editing text. It is about establishing a rigorous standard for communication. By identifying symptoms, refining criteria, collaborating across roles, and measuring outcomes, teams can eliminate ambiguity and close gaps in their acceptance criteria.

The result is a smoother development process, fewer defects, and higher stakeholder satisfaction. A strong user story is the foundation of a successful project. Invest the time to build it correctly, and the execution will follow naturally. Clarity is the most valuable asset a team can possess.