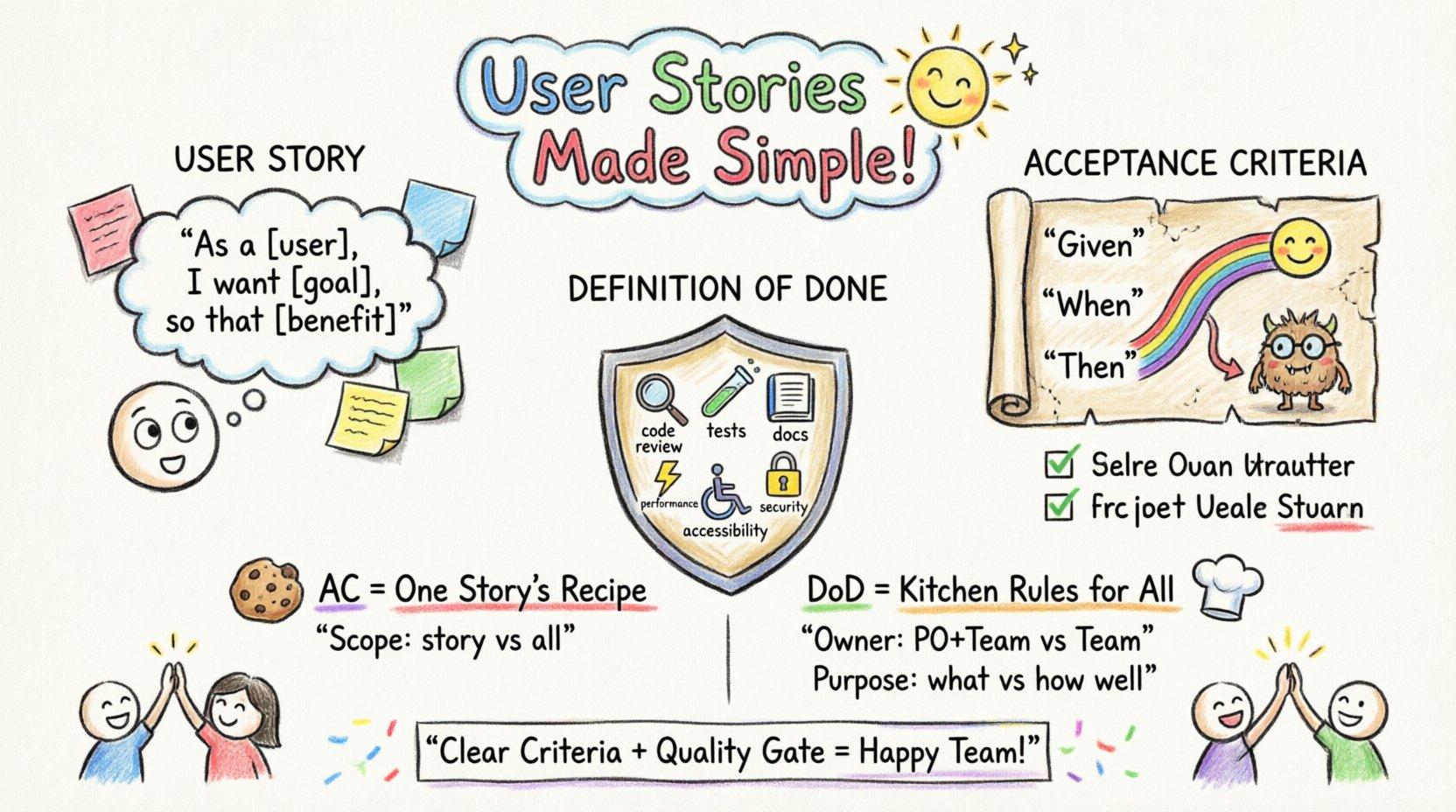

In the landscape of agile development, clarity is the currency of success. When a team begins work on a new feature, the foundation lies in the User Story. However, a User Story is merely a placeholder for a conversation. To transform that conversation into a working product, two critical artifacts are required: Acceptance Criteria and Definition of Done. These concepts serve as the guardrails that ensure quality, alignment, and predictability.

Many teams struggle with the distinction between these two concepts. Sometimes they are treated as the same thing, leading to confusion during testing or deployment. At other times, they are overlooked entirely, resulting in scope creep or incomplete features slipping into production. This guide explores the mechanics, purpose, and implementation of Acceptance Criteria and Definition of Done to help your team deliver value consistently.

What is a User Story? 📝

Before dissecting the components of a story, it is essential to recall what a User Story actually is. In agile frameworks, a User Story is a short, simple description of a feature told from the perspective of the person who desires the new capability. It typically follows the format:

- As a [type of user],

- I want [some goal],

- So that [some benefit].

This format focuses on the value provided to the user, rather than the technical implementation. However, this format alone is not enough to guide development. It does not specify the boundaries of the work or the standards required for completion. This is where Acceptance Criteria and Definition of Done step in.

Acceptance Criteria (AC): The Conditions for Completion ✅

Acceptance Criteria are a set of conditions that a User Story must satisfy to be considered complete from the product owner’s perspective. They define the boundaries of the story and provide a clear understanding of what the final product should look like.

The Purpose of Acceptance Criteria

Acceptance Criteria serve multiple functions within the development lifecycle:

- Clarification: They remove ambiguity. If a developer asks, “Should the button turn green or blue on hover?”, the AC should answer this.

- Testing: They form the basis for test cases. QA engineers use these conditions to validate the feature.

- Agreement: They ensure the product owner and the development team agree on what constitutes “finished” for this specific story.

Characteristics of Good Acceptance Criteria

Effective Acceptance Criteria are specific, measurable, and unambiguous. Avoid vague terms like “user-friendly” or “fast” without defining metrics. Instead, specify exact behaviors.

- Atomic: Each criterion should address a single requirement.

- Testable: It must be possible to verify the criterion with a pass or fail result.

- Independent: Criteria should not rely on external stories to be validated.

- Relevant: Focus on the user value, not the internal code structure.
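As a rough illustration of the advice to avoid vague terms, a team could run a small check over draft criteria. The sketch below is an assumption-laden example, not an established tool: the `VAGUE_TERMS` list and the `find_vague_terms` helper are hypothetical, and treating any digit as evidence of a metric is a crude heuristic.

```python
import re

# Hypothetical list of vague terms; a team would tune this to its own backlog.
VAGUE_TERMS = ("user-friendly", "fast", "intuitive", "easy")


def find_vague_terms(criterion: str) -> list[str]:
    """Return vague terms found in a criterion that has no numeric threshold."""
    text = criterion.lower()
    # Crude assumption: a digit suggests a measurable threshold is present.
    if re.search(r"\d", text):
        return []
    return [
        term
        for term in VAGUE_TERMS
        if re.search(rf"\b{re.escape(term)}\b", text)
    ]


if __name__ == "__main__":
    for draft in (
        "The search page should be fast and user-friendly",
        "Search results load in under 2 seconds",
    ):
        print(draft, "->", find_vague_terms(draft))
```

A check like this cannot judge whether a criterion is good. It only flags wording that deserves a second look during refinement.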

Examples of Acceptance Criteria

Consider a story about adding a “Forgot Password” feature. Here is how AC might look:

- Given the user is on the login page,
  When they click the “Forgot Password” link,
  Then they are redirected to the password recovery page.

- Given the user enters a valid email,
  When they submit the form,
  Then a confirmation message is displayed.

- Given the user enters an invalid email,
  When they submit the form,
  Then an error message indicates the email format is incorrect.

These examples follow the Given/When/Then structure (often associated with Gherkin syntax), which promotes clarity and aligns with automated testing frameworks.
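To show how Given/When/Then criteria can map onto automated tests, here is a minimal Python sketch covering the valid-email and invalid-email scenarios. Everything in it is assumed for illustration: `submit_recovery_form` is a hypothetical stand-in for the real feature, and `EMAIL_PATTERN` is a deliberately simple format check, not a complete email validator.

```python
import re

# Hypothetical, simplified email format check for illustration only.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def submit_recovery_form(email: str) -> str:
    """Stand-in for the feature: return the message shown after submitting."""
    if EMAIL_PATTERN.match(email):
        return "confirmation"
    return "error: invalid email format"


def test_valid_email_shows_confirmation():
    # Given the user enters a valid email
    email = "user@example.com"
    # When they submit the form
    message = submit_recovery_form(email)
    # Then a confirmation message is displayed
    assert message == "confirmation"


def test_invalid_email_shows_format_error():
    # Given the user enters an invalid email
    email = "not-an-email"
    # When they submit the form
    message = submit_recovery_form(email)
    # Then an error message indicates the email format is incorrect
    assert message == "error: invalid email format"


if __name__ == "__main__":
    test_valid_email_shows_confirmation()
    test_invalid_email_shows_format_error()
    print("all acceptance checks passed")
```

Each test mirrors one criterion line for line, so a failing test points directly at the criterion that is not yet met.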

Definition of Done (DoD): The Quality Gate 🚧

While Acceptance Criteria are specific to a single User Story, the Definition of Done is a global standard applied to all work within a sprint or release. It represents the checklist of requirements that must be met for any increment of work to be considered potentially shippable.

The Purpose of Definition of Done

The DoD acts as a quality gate. It ensures that technical debt does not accumulate and that the product remains in a releasable state at all times. If a story meets its Acceptance Criteria but does not meet the Definition of Done, it cannot be marked as complete.

Common elements found in a Definition of Done include:

- Code Review: All code must be reviewed by at least one peer.

- Unit Tests: Automated tests must pass with 100% coverage for the new logic.

- Documentation: API documentation or user guides are updated.

- Performance: The feature meets minimum load time requirements.

- Accessibility: The feature adheres to WCAG guidelines.

- Security: No known vulnerabilities are introduced.

Why DoD Matters

Without a Definition of Done, teams often fall into the trap of “technically done but not actually ready.” A feature might function as intended according to Acceptance Criteria, but it might have broken another part of the system, lack proper documentation, or introduce security risks. The DoD prevents this by enforcing a baseline of quality across the entire backlog.

Acceptance Criteria vs. Definition of Done: The Key Differences 🆚

Confusion often arises because both concepts deal with “completeness.” However, their scope and ownership differ significantly. Understanding the distinction prevents misalignment between developers, testers, and product owners.

| Aspect | Acceptance Criteria | Definition of Done |
|---|---|---|
| Scope | Specific to a single User Story | Global for the entire team or project |
| Ownership | Product Owner and Development Team | Entire Development Team |
| Flexibility | Changes per story based on requirements | Stable, though can be updated over time |
| Purpose | Defines functional requirements | Defines quality and non-functional standards |
| Verification | Functional testing against user needs | Technical and process verification |

Think of Acceptance Criteria as the destination for a specific trip, while the Definition of Done is the safety checklist required for every vehicle on the road.

How to Write Effective Acceptance Criteria 📝

Writing Acceptance Criteria is a collaborative effort. It should not be done in isolation by the product owner. The best practice involves the “Three Amigos” concept, where the Product Owner, a Developer, and a Tester collaborate early in the refinement process.

Step 1: Identify the User Goal

Start by restating the value proposition. What problem is the user solving? This ensures the criteria stay focused on the user experience rather than technical details.

Step 2: Define Positive and Negative Scenarios

Don’t just write the happy path. Consider what happens when things go wrong.

- Happy Path: The user performs the action exactly as expected.

- Edge Cases: What happens with special characters, missing data, or slow connections?

- Negative Path: How does the system handle invalid inputs gracefully?

Step 3: Use Clear Language

Avoid jargon where possible. If technical terms are necessary, ensure they are defined. Use active voice and describe outcomes the user can observe. For example, “The user receives an error message…” is clearer than “The system shall validate…”.

Step 4: Prioritize

If a story is large, break it down. Acceptance Criteria should be achievable within the sprint. If the criteria imply work that cannot be finished in the sprint, the story needs to be split.

How to Establish a Robust Definition of Done 🛠️

The Definition of Done is not a static document. It evolves as the team matures and as technology changes. It should be visible to everyone, often displayed on a physical or digital board.

Step 1: Consult the Team

The DoD is owned by the team that does the work. Developers, testers, and designers should contribute to the list. If a developer adds a requirement, they are more likely to adhere to it.

Step 2: Categorize Requirements

Group DoD items into logical categories to make the checklist manageable.

- Code Quality: Linting passed, no warnings, peer review completed.

- Testing: Unit tests written, integration tests passed, manual testing verified.

- Deployment: Deployed to staging, smoke tests passed.

- Documentation: Readme updated, API docs synced.

Step 3: Make it a Hard Stop

If a story does not meet the DoD, it cannot be closed. This requires discipline. It is tempting to say, “We will fix the documentation later,” but that creates technical debt. The story remains in “In Progress” until the DoD is met.
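The hard-stop rule can be sketched as a simple gate: a story closes only when every Acceptance Criterion passes and no DoD item is missing. The Python below is a minimal sketch under stated assumptions. The `Story` class, the `DEFINITION_OF_DONE` items, and the `can_close` helper are all hypothetical and not tied to any particular tracking tool.

```python
from dataclasses import dataclass, field

# Hypothetical DoD items, loosely based on the categories in Step 2.
DEFINITION_OF_DONE = (
    "peer review completed",
    "unit tests written",
    "deployed to staging",
    "documentation updated",
)


@dataclass
class Story:
    title: str
    # Maps each acceptance criterion to whether it has been verified.
    acceptance_criteria: dict[str, bool] = field(default_factory=dict)
    # DoD items the team has actually completed for this story.
    dod_checks: set[str] = field(default_factory=set)


def missing_dod_items(story: Story) -> list[str]:
    """List the shared DoD items this story has not yet satisfied."""
    return [item for item in DEFINITION_OF_DONE if item not in story.dod_checks]


def can_close(story: Story) -> bool:
    """A story closes only if it has AC, all AC pass, and the DoD is met."""
    ac_met = bool(story.acceptance_criteria) and all(
        story.acceptance_criteria.values()
    )
    return ac_met and not missing_dod_items(story)


if __name__ == "__main__":
    story = Story(
        title="Forgot Password",
        acceptance_criteria={"valid email shows confirmation": True},
        dod_checks={"peer review completed", "unit tests written",
                    "deployed to staging"},
    )
    print(can_close(story), missing_dod_items(story))
```

In this sketch the story stays open even though its only criterion passes, because the documentation item is still missing. That is the “fix it later” case the hard stop is meant to block.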

Step 4: Review and Refine

During retrospectives, ask the team: “Did our DoD catch any issues?” or “Is there a requirement we are consistently missing?” Update the DoD based on these insights.

Common Mistakes to Avoid ⚠️

Even experienced teams stumble when implementing these practices. Being aware of common pitfalls can save significant time and frustration.

1. Vague Acceptance Criteria

Writing criteria like “The system should be secure” is useless. It is not testable. Instead, specify “The system must require multi-factor authentication for admin accounts.”

2. DoD as a Box-Ticking Exercise

If the team checks the DoD box without actually performing the work (e.g., skipping the code review), the DoD loses its meaning. The DoD must be respected, not just read.

3. Overcomplicating the DoD

A DoD with 50 items becomes overwhelming. Focus on the essential quality gates. If a requirement is rarely violated, it might be a guideline rather than a hard DoD item.

4. Ignoring Non-Functional Requirements

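Teams often focus heavily on AC (functional) and ignore DoD (non-functional). Baseline standards for performance, security, and accessibility belong in the DoD, while a requirement specific to one story, such as multi-factor authentication for admin accounts, still belongs in that story’s AC. Neglecting the baseline leads to features that work but are slow or unsafe.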

5. Creating DoD Without Team Buy-In

If the Product Owner sets the DoD without developer input, the team may resist it. The DoD must be a shared agreement.

Integrating Into the Workflow 🔄

Acceptance Criteria and Definition of Done should be integrated into every stage of the development lifecycle, not just at the end.

Refinement Phase

During backlog refinement, the Product Owner drafts the Acceptance Criteria. The team reviews them to ensure they are testable and feasible. Questions are asked and answered here, not during the sprint.

Sprint Planning

When committing to stories, the team verifies that the Acceptance Criteria are clear. If they are not, the story is not ready for the sprint.

Development Phase

Developers write code to meet the AC. Simultaneously, they ensure they adhere to the DoD (e.g., writing tests, requesting reviews).

Testing Phase

QA engineers verify the AC against the built feature. They also verify the DoD (e.g., checking code coverage reports, accessibility scans).

Review and Closure

Before a story is moved to “Done,” the team confirms both AC and DoD are satisfied. If not, it returns to the queue.

Measuring Success 📊

How do you know if your Acceptance Criteria and Definition of Done are working? Track metrics over time.

- Defect Rate: Are bugs found in production decreasing? A strong DoD should catch issues before release.

- Rejection Rate: Are stories being rejected by the Product Owner frequently? This indicates poor AC definition.

- Velocity Stability: Does the team’s velocity remain consistent? If stories are frequently returned for missing DoD items, velocity will fluctuate.

- Deployment Frequency: Does a clear DoD allow for smoother, more frequent deployments?
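A few of these metrics can be computed from basic per-sprint records. The sketch below is illustrative only: the `SprintRecord` fields and the three helper functions are hypothetical, and the figures in the usage example are invented sample data, not real results.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class SprintRecord:
    stories_submitted: int   # stories presented to the Product Owner
    stories_rejected: int    # stories the Product Owner sent back
    points_completed: int    # velocity for the sprint
    production_defects: int  # bugs found in production afterwards


def rejection_rate(sprints: list[SprintRecord]) -> float:
    """Share of submitted stories rejected across all sprints."""
    submitted = sum(s.stories_submitted for s in sprints)
    rejected = sum(s.stories_rejected for s in sprints)
    return rejected / submitted if submitted else 0.0


def velocity_variation(sprints: list[SprintRecord]) -> float:
    """Standard deviation of velocity relative to its mean (0.0 = stable)."""
    velocities = [s.points_completed for s in sprints]
    average = mean(velocities) if velocities else 0
    return pstdev(velocities) / average if average else 0.0


def defects_decreasing(sprints: list[SprintRecord]) -> bool:
    """True if production defects never rise from one sprint to the next."""
    defects = [s.production_defects for s in sprints]
    return all(later <= earlier for earlier, later in zip(defects, defects[1:]))


if __name__ == "__main__":
    # Invented sample data for illustration.
    history = [SprintRecord(10, 2, 20, 3), SprintRecord(10, 1, 20, 1)]
    print(rejection_rate(history))
    print(velocity_variation(history))
    print(defects_decreasing(history))
```

Numbers like these are conversation starters for a retrospective. A rising rejection rate, for example, is a prompt to revisit how AC are written, not a verdict on the team.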

Frequently Asked Questions ❓

Here are common questions teams ask when implementing these standards.

Q: Can Acceptance Criteria change during a sprint?

A: Ideally, no. If the AC changes significantly, it may indicate the story was not well understood during refinement. Minor clarifications are acceptable, but major scope changes should result in a new story or an adjustment to the sprint scope.

Q: Does every story need a unique Definition of Done?

A: No. The DoD is global and applies to every story. Specific technical stories may add extra requirements to the checklist for that item, but the base DoD still applies.

Q: What if the team disagrees on the DoD?

A: Facilitate a discussion. The goal is consensus. If a developer insists on a requirement that the tester disagrees with, discuss the risk. If the risk is low, drop it. If high, keep it.

Q: How detailed should Acceptance Criteria be?

A: Detailed enough to be testable. If a QA engineer can write a test case directly from the AC, it is sufficiently detailed. If they need to ask questions, it needs more detail.

Q: Can automated testing replace manual testing in the DoD?

A: It depends. For critical logic, yes. For user experience or visual elements, manual validation might still be required. The DoD should reflect the best practice for quality assurance.

Final Thoughts on Quality and Alignment 🌟

Implementing Acceptance Criteria and Definition of Done is not about bureaucracy; it is about respect. It is respect for the user who expects a working feature, respect for the developer who wants clear requirements, and respect for the product owner who needs confidence in delivery.

When these two concepts are used correctly, they create a shared language for the team. They reduce the need for constant clarification meetings. They prevent the accumulation of technical debt. And most importantly, they ensure that every story completed adds real value to the product.

Start small. Define a basic DoD. Write clear AC for your next story. Review them in your next retrospective. Over time, these practices will become second nature, embedded in the culture of your team. The result is a steady stream of high-quality software that meets the needs of the people who use it.