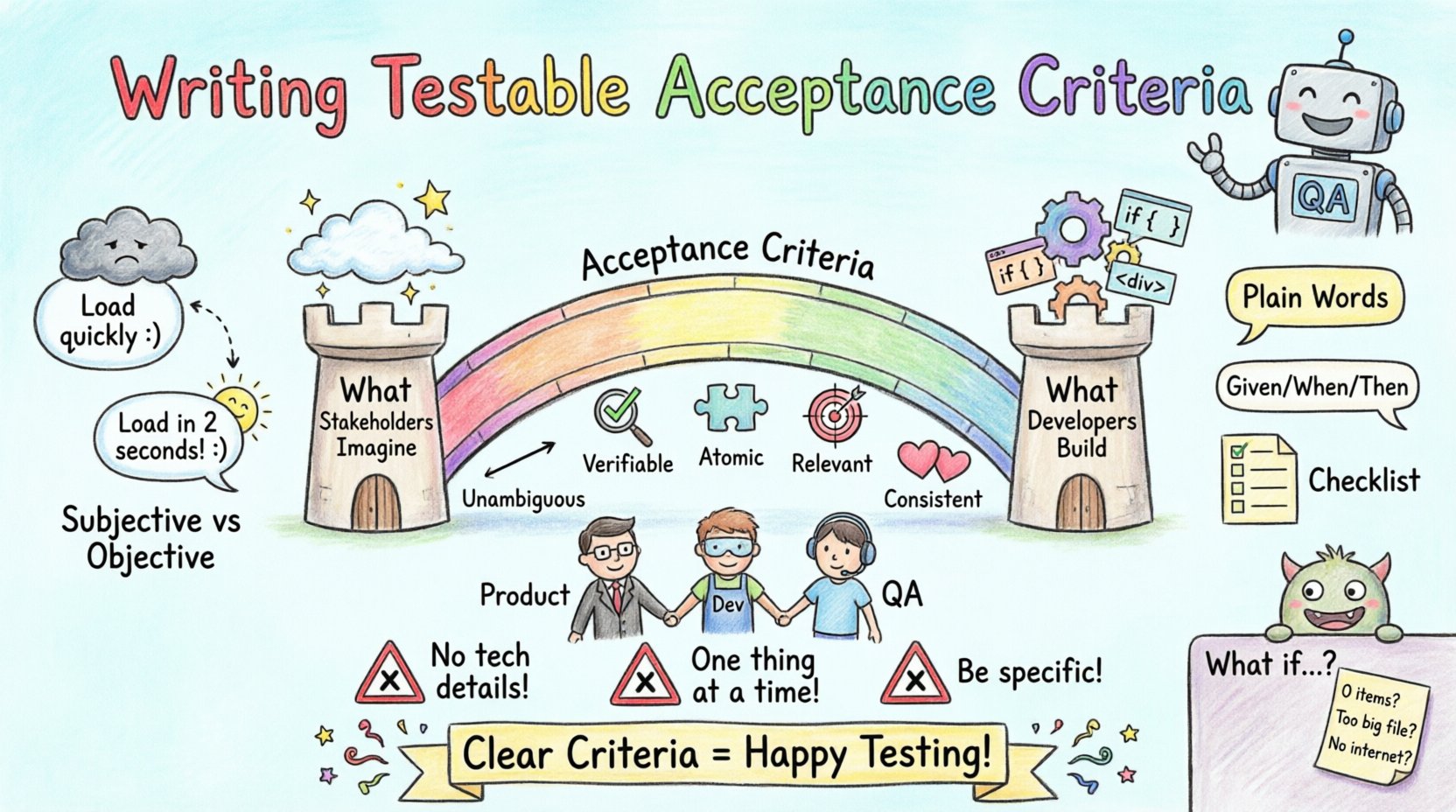

In the fast-paced environment of software development, the gap between what a stakeholder envisions and what a developer builds can be vast. A user story's acceptance criteria often bridge this gap. For Quality Assurance (QA) teams, these criteria are not just a checklist; they are the contract of quality. When written clearly, they transform ambiguity into actionable test scenarios.

Many teams struggle with vague requirements. Phrases like “user-friendly” or “fast loading” appear frequently in early drafts but fail under the scrutiny of rigorous testing. This guide provides a structured approach to crafting testable acceptance criteria that empower QA engineers, reduce defect leakage, and ensure alignment across Product, Development, and Testing functions.

🎯 Why Testable Acceptance Criteria Matter

Acceptance Criteria (AC) define the boundaries of a user story. They specify the conditions that must be met for the story to be considered complete. For QA professionals, these statements serve as the foundation for test case creation. Without them, testing becomes a guessing game.

- Clarity in Expectations: Clear criteria eliminate interpretation errors between roles.

- Efficient Testing: Specific conditions allow testers to write precise test cases immediately.

- Reduced Rework: Ambiguity often leads to building the wrong feature. Good AC prevents this waste.

- Automated Testing Support: Testable statements are prerequisites for automation scripts.

When AC is vague, the QA team must spend sprint time clarifying requirements, which slows delivery. When AC is precise, testers can focus on validation instead of interpretation.

🔍 Characteristics of a Testable Statement

Not every requirement is testable. A statement works as an acceptance criterion only if it can be verified objectively. To ensure testability, criteria should adhere to the following principles:

- Unambiguous: There is only one interpretation of the statement.

- Verifiable: It is possible to confirm pass or fail through observation or data.

- Atomic: Each criterion addresses a single condition, not a compound requirement.

- Relevant: It directly relates to the user story goal.

- Consistent: It does not contradict other criteria or system constraints.

Consider the difference between subjective and objective language. Subjective terms rely on opinion, while objective terms rely on data.

📉 Subjective vs. Objective Examples

| Subjective (Avoid) | Objective (Target) |
|---|---|
| The page should load quickly. | The page should load within 2 seconds on a 3G connection. |
| The system should be secure. | Passwords must be stored only as salted Argon2id hashes, never as plain text. |
| Users should find it easy to navigate. | Users can reach the checkout page within 3 clicks from the homepage. |
| The report must look good. | The report must display a total of 5 columns with aligned headers. |

Notice how the objective versions provide specific metrics, methods, or counts. These let a tester make a pass/fail decision without consulting a Product Owner.
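To catch subjective wording before a story reaches the sprint, a team could scan draft criteria for words like those in the left column. The sketch below assumes a hand-maintained term list; `SUBJECTIVE_TERMS` and `find_subjective_terms` are hypothetical names, not part of any tool. It only flags wording for human review and cannot prove that a criterion is testable.

```python
import re

# Hypothetical starter list; extend it with terms your team keeps seeing.
SUBJECTIVE_TERMS = [
    "quickly", "fast", "secure", "easy", "user-friendly",
    "look good", "intuitive", "some time",
]


def find_subjective_terms(criterion: str) -> list[str]:
    """Return the subjective terms that appear in a draft criterion."""
    found = []
    for term in SUBJECTIVE_TERMS:
        # \b word boundaries keep "fast" from matching inside "breakfast".
        pattern = r"\b" + re.escape(term) + r"\b"
        if re.search(pattern, criterion, flags=re.IGNORECASE):
            found.append(term)
    return found


for draft in [
    "The page should load quickly.",
    "The page should load within 2 seconds on a 3G connection.",
]:
    print(draft, "->", find_subjective_terms(draft) or "OK")
```

A flagged term is a prompt for discussion, not an automatic rejection: "secure" may be acceptable in a story title as long as the criteria beneath it are measurable.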

🛠 Writing Techniques for Acceptance Criteria

Several formats exist for documenting acceptance criteria. The choice depends on the team’s maturity and the complexity of the feature. Below are three commonly used methods.

1. Plain Language Statements

Simple, declarative sentences work well for straightforward features. This approach is accessible to non-technical stakeholders.

- When the user clicks the submit button, a success message appears.

- When the user enters an invalid email, an error message displays below the field.

- The system must not allow duplicate account creation with the same email address.
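Each plain-language statement can map to one automated check. Here is a minimal sketch for the duplicate-account rule, assuming a hypothetical in-memory `AccountService` in place of the real system. It also assumes addresses are compared case-insensitively after trimming whitespace; the criterion does not say, which is exactly the kind of question worth raising with the Product Owner.

```python
class DuplicateEmailError(Exception):
    """Raised when an account already exists for an email address."""


class AccountService:
    """Hypothetical in-memory stand-in for the real account system."""

    def __init__(self) -> None:
        self._emails: set[str] = set()

    def create_account(self, email: str) -> None:
        # Assumption: addresses are compared case-insensitively after trimming.
        normalized = email.strip().lower()
        if normalized in self._emails:
            raise DuplicateEmailError(normalized)
        self._emails.add(normalized)


def test_duplicate_email_is_rejected() -> None:
    service = AccountService()
    service.create_account("ana@example.com")
    try:
        service.create_account("ana@example.com")
    except DuplicateEmailError:
        return  # Criterion met: the second account was refused.
    raise AssertionError("A duplicate account was created.")


test_duplicate_email_is_rejected()
```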

2. Gherkin Syntax (Given/When/Then)

This format aligns closely with Behavior Driven Development (BDD). It structures each criterion as context (Given), action (When), and outcome (Then), and it is often preferred for complex workflows.

- Given: The user is on the login page.

- When: The user enters a valid username and password.

- Then: The system redirects the user to the dashboard.

This structure forces the writer to consider preconditions and expected outcomes explicitly.
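The Given/When/Then steps translate almost line for line into a test. BDD tools such as behave or pytest-bdd bind Gherkin text to step functions; the sketch below skips any framework and uses plain assertions against a hypothetical `FakeApp` object so the structure stays visible.

```python
from dataclasses import dataclass, field


@dataclass
class FakeApp:
    """Hypothetical stand-in for the application under test."""

    users: dict[str, str] = field(default_factory=lambda: {"ana": "s3cret!"})
    current_page: str = "login"

    def log_in(self, username: str, password: str) -> None:
        if self.users.get(username) == password:
            self.current_page = "dashboard"
        # On failure, current_page is left unchanged.


def test_valid_login_redirects_to_dashboard() -> None:
    # Given: the user is on the login page.
    app = FakeApp()
    assert app.current_page == "login"
    # When: the user enters a valid username and password.
    app.log_in("ana", "s3cret!")
    # Then: the system redirects the user to the dashboard.
    assert app.current_page == "dashboard"


test_valid_login_redirects_to_dashboard()
```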

3. Checklist Format

Sometimes a list of conditions is sufficient, especially for UI updates or configuration changes. Each item represents a testable condition.

- Header displays company logo.

- Navigation bar remains fixed at the top during scroll.

- Footer contains copyright year and legal links.

- Contact form requires first name and last name.
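A checklist can also be kept as data, pairing each item's wording with a check. This sketch assumes a hypothetical `page` dictionary standing in for what a browser automation tool would report. Checking CSS `position: fixed` is only a proxy for "remains fixed at the top during scroll"; a real suite would confirm it by scrolling in a browser.

```python
import re

# Hypothetical snapshot of a rendered page; a real suite would read these
# values from a browser automation tool.
page = {
    "header": {"logo": "company-logo.svg"},
    "navbar": {"position": "fixed", "top": 0},
    "footer": {"text": "© 2024 Example Corp", "links": ["Privacy", "Terms"]},
    "contact_form": {"required": {"first_name", "last_name", "email"}},
}

# Each checklist item keeps its original wording next to its check.
CHECKLIST = {
    "Header displays company logo":
        lambda p: bool(p["header"].get("logo")),
    "Navigation bar remains fixed at the top during scroll":
        lambda p: p["navbar"].get("position") == "fixed" and p["navbar"].get("top") == 0,
    "Footer contains copyright year and legal links":
        lambda p: re.search(r"©\s*\d{4}", p["footer"]["text"]) is not None
        and len(p["footer"]["links"]) > 0,
    "Contact form requires first name and last name":
        lambda p: {"first_name", "last_name"} <= p["contact_form"]["required"],
}


def run_checklist(snapshot: dict) -> dict[str, bool]:
    """Evaluate every checklist item against a page snapshot."""
    return {item: check(snapshot) for item, check in CHECKLIST.items()}


for item, passed in run_checklist(page).items():
    print("PASS" if passed else "FAIL", item)
```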

🤝 Collaboration Strategies

Writing acceptance criteria is rarely a solitary task. It requires input from the Product Owner, Developers, and QA Engineers. The “Three Amigos” session is a common practice where these three roles meet to define criteria together.

Key Collaboration Goals

- Shared Understanding: Ensure everyone interprets the requirements identically.

- Feasibility Check: Developers confirm whether the criteria are technically achievable.

- Testability Review: QA ensures the criteria can be verified without ambiguity.

- Edge Case Identification: The group discusses what happens when things go wrong.

By involving QA early in the writing phase, potential blockers are identified before coding begins. This reduces the risk of finding critical defects late in the cycle.

⚠️ Common Pitfalls and Anti-Patterns

Even experienced teams fall into traps when writing criteria. Recognizing these patterns helps in maintaining high quality.

1. Including Technical Implementation Details

Acceptance criteria should describe what the system does, not how it does it. Implementation details belong in technical design documents.

- Bad: The database must use a MySQL table named users.

- Good: The system must store user credentials and retrieve them to authenticate the user at login.

2. Overloading Multiple Features

Each criterion should address one specific behavior. Combining multiple behaviors creates a complex condition that is hard to test.

- Bad: User can log in and see their profile picture.

- Good (two separate criteria): "User can log in." and "User profile displays the uploaded image."
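Splitting the criterion pays off in the test suite too: with one test per behavior, a failure points at exactly one broken condition. A sketch with a hypothetical in-memory user record (`USERS`, `log_in`, and `profile_image` are illustrative names only):

```python
from typing import Optional

# Hypothetical user record, for illustration only.
USERS = {"ana": {"password": "s3cret!", "avatar": "ana.png"}}


def log_in(username: str, password: str) -> bool:
    user = USERS.get(username)
    return user is not None and user["password"] == password


def profile_image(username: str) -> Optional[str]:
    return USERS.get(username, {}).get("avatar")


# One test per criterion, so a failure names exactly one broken behavior.
def test_user_can_log_in() -> None:
    assert log_in("ana", "s3cret!")


def test_profile_displays_uploaded_image() -> None:
    assert profile_image("ana") == "ana.png"


test_user_can_log_in()
test_profile_displays_uploaded_image()
```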

3. Using Negative Phrasing Excessively

While negative testing is important, too many “must not” statements can obscure the primary flow.

- Bad: The system must not allow null values. The system must not allow empty strings. The system must not allow special characters.

- Good: The system validates input fields to ensure they contain only alphanumeric characters and are not empty.
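The positive rule maps onto a single validator, and the three negative statements become test inputs. This sketch assumes "alphanumeric" means ASCII letters and digits; Python's `str.isalnum()` would also accept characters such as `é`, so the criterion should state which reading is intended. `is_valid_field` is a hypothetical name.

```python
import re
from typing import Optional

# Assumption: "alphanumeric" means ASCII letters and digits only.
# The "+" requires at least one character, which also rules out empty strings.
_ALNUM = re.compile(r"[A-Za-z0-9]+")


def is_valid_field(value: Optional[str]) -> bool:
    """Return True if value is non-empty and contains only ASCII letters and digits."""
    if value is None:
        return False
    return _ALNUM.fullmatch(value) is not None


# The three "must not" statements become rejected inputs.
for bad in [None, "", "abc$"]:
    assert not is_valid_field(bad)
assert is_valid_field("Order42")
```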

4. Ignoring Non-Functional Requirements

Functional criteria are vital, but performance, security, and accessibility matter too. These should be included if they impact the user experience.

- Response time must not exceed 200ms for search queries.

- The interface must support keyboard navigation for all interactive elements.

- Data transmission must be encrypted via HTTPS.
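A non-functional criterion is testable only once it says what is measured. The sketch below times a hypothetical in-process `search` function with `time.perf_counter()` and checks the slowest of 20 runs, which is the literal reading of "must not exceed". Many teams instead specify a percentile (for example, the 95th), and a real check would time requests against the deployed endpoint rather than a local function.

```python
import time


def search(catalog: list[str], query: str) -> list[str]:
    """Hypothetical search standing in for the real search endpoint."""
    q = query.lower()
    return [item for item in catalog if q in item.lower()]


def elapsed_ms(catalog: list[str], query: str) -> float:
    """Time one search call in milliseconds using a high-resolution clock."""
    start = time.perf_counter()
    search(catalog, query)
    return (time.perf_counter() - start) * 1000


LIMIT_MS = 200
catalog = [f"product {i}" for i in range(10_000)]
timings = [elapsed_ms(catalog, "product 42") for _ in range(20)]
worst = max(timings)
assert worst <= LIMIT_MS, f"slowest run took {worst:.1f} ms (limit {LIMIT_MS} ms)"
print(f"slowest of {len(timings)} runs: {worst:.1f} ms")
```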

🧩 Edge Cases and Boundary Conditions

Standard happy paths are easy to write. The true value of QA lies in exploring the boundaries. Acceptance criteria should explicitly mention how the system handles extreme or unusual inputs.

Categories of Edge Cases

- Zero Values: What happens if a quantity is 0?

- Maximum Limits: What is the max character count for a text field?

- Null States: How does the UI render when data is missing?

- Concurrent Actions: What happens if two users edit the same record simultaneously?

- Network Failures: How does the system behave when the network connection drops?

Example of an edge case criterion:

- If a user attempts to upload a file larger than 50MB, the system displays an error message and rejects the file.
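Boundary-value analysis turns this criterion into concrete inputs: just below, exactly at, and just above the limit. Writing the test also exposes a gap, because the criterion does not say whether 50MB means 50 * 1024 * 1024 bytes or 50,000,000 bytes. The sketch assumes the binary reading and uses a hypothetical `accept_upload` function.

```python
# Assumption: "50MB" means 50 MiB. The criterion does not say; the other
# common reading is 50,000,000 bytes.
MAX_UPLOAD_BYTES = 50 * 1024 * 1024


def accept_upload(size_bytes: int) -> tuple[bool, str]:
    """Hypothetical check: reject files strictly larger than the limit."""
    if size_bytes > MAX_UPLOAD_BYTES:
        return False, "File exceeds the 50MB limit."
    return True, ""


# Expected outcomes at and around the boundary.
cases = {
    0: True,                     # Zero value: accepted here, but the AC is silent.
    MAX_UPLOAD_BYTES - 1: True,
    MAX_UPLOAD_BYTES: True,      # "Larger than" leaves exactly 50MB allowed.
    MAX_UPLOAD_BYTES + 1: False,
}
for size, expected in cases.items():
    accepted, message = accept_upload(size)
    assert accepted == expected, f"{size} bytes: expected {expected}"
    # The AC also requires an error message whenever a file is rejected.
    assert accepted or message
```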

🔄 Maintenance and Evolution

Acceptance criteria are not static documents. As the product evolves, so must the criteria. Whenever a change alters a feature's behavior, its criteria need to be updated to match; a pure refactoring, which preserves behavior, should leave them unchanged.

Maintenance Best Practices

- Review During Sprint Planning: Revisit old stories to ensure criteria still match the current behavior.

- Update Post-Bug Fix: If a bug reveals a missing condition, add it to the AC immediately.

- Archive Obsolete Criteria: Remove criteria that no longer apply to avoid confusion.

- Version Control: Keep a history of changes to criteria for audit purposes.

Keeping criteria up to date ensures that regression testing remains effective. Outdated criteria give false confidence: tests derived from them can keep passing while the feature's new behavior goes unverified.

📊 Measuring the Quality of Acceptance Criteria

How do you know if your acceptance criteria are working? Use metrics to evaluate their effectiveness over time.

- Test Case Coverage: Track the share of criteria that map to at least one test case. Criteria that cannot be mapped are often too ambiguous to test.

- Defect Leakage: If defects escape to production in behavior the AC did not cover, the criteria were likely incomplete.

- Clarification Time: Track how long QA spends asking questions about requirements. Consistently high clarification time points to unclear AC.

- Automation Rate: High automation rates tend to go hand in hand with testable, unambiguous criteria.

Regular retrospectives can help teams discuss these metrics and adjust their writing process accordingly.
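The ratio-based metrics are simple to compute once the counts are available. This sketch assumes hypothetical counts exported from a test-management tool or issue tracker, and uses one common definition of defect leakage (production defects divided by all defects found). `SprintCounts` and `ac_metrics` are illustrative names, and the sample numbers are made up for the example.

```python
from dataclasses import dataclass


@dataclass
class SprintCounts:
    """Hypothetical counts exported from a test-management or issue tracker."""

    criteria_total: int
    criteria_with_tests: int
    defects_before_release: int
    defects_in_production: int
    tests_total: int
    tests_automated: int


def ratio(part: int, whole: int) -> float:
    # Return 0.0 for an empty denominator instead of raising ZeroDivisionError.
    return part / whole if whole else 0.0


def ac_metrics(c: SprintCounts) -> dict[str, float]:
    return {
        # Share of criteria mapped to at least one test case.
        "criteria_coverage": ratio(c.criteria_with_tests, c.criteria_total),
        # Production defects as a share of all defects found.
        "defect_leakage": ratio(
            c.defects_in_production,
            c.defects_before_release + c.defects_in_production,
        ),
        "automation_rate": ratio(c.tests_automated, c.tests_total),
    }


# Illustrative numbers only.
print(ac_metrics(SprintCounts(40, 36, 18, 2, 120, 90)))
```

Trends across several sprints say more than any single value, so these numbers fit naturally into the retrospective discussion.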

🔗 Integration with Definition of Done

Acceptance Criteria are specific to a user story. The Definition of Done (DoD) is a shared standard that applies to every story in the sprint or release. They work together but serve different purposes.

- DoD: “All code reviewed,” “All unit tests passed,” “Documentation updated.” (Global standards)

- AC: “User can reset password via email.” (Feature specific)

A story is only complete when both the AC are met and the DoD is satisfied. QA teams must verify both layers to sign off on a feature.
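The two-layer rule can be expressed as a single check. In this sketch `story_is_done` is a hypothetical helper, and treating a story with no acceptance criteria as not done is an added assumption.

```python
def story_is_done(ac_results: dict[str, bool], dod_results: dict[str, bool]) -> bool:
    """Done only when every acceptance criterion and every DoD item passes."""
    # Assumption: a story with no acceptance criteria cannot be verified, so it is not done.
    if not ac_results:
        return False
    return all(ac_results.values()) and all(dod_results.values())


ac = {"User can reset password via email": True}
dod = {
    "All code reviewed": True,
    "All unit tests passed": False,
    "Documentation updated": True,
}
print(story_is_done(ac, dod))  # False: one DoD item is still open
```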

💡 Practical Examples

To solidify understanding, let’s look at a complete example of a user story with poor and improved criteria.

Story: Password Reset Functionality

❌ Poor Acceptance Criteria

- User can reset password.

- System sends email.

- Link expires after some time.

- Security is important.

✅ Improved Acceptance Criteria

- Given the user is on the login page, when they click “Forgot Password”, they are redirected to the reset form.

- When the user enters a registered email address and submits, a confirmation message appears on screen.

- An email containing a unique reset link is sent within 5 minutes of submission.

- The reset link expires 24 hours after generation.

- If the user opens an invalid or expired reset link, the system displays an error message and allows the user to request a new link.

- New passwords must meet complexity requirements: at least 8 characters, at least 1 number, and at least 1 symbol.

The improved version allows QA to write specific test cases for email timing, link expiration, and password complexity rules.
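Two of those test cases can be sketched directly: link expiry and password complexity. The sketch assumes the link becomes invalid at exactly the 24-hour mark and that a "symbol" is any character other than a letter, digit, underscore, or whitespace; both points are worth confirming with the Product Owner. `link_is_valid` and `meets_complexity` are hypothetical names. Email delivery time needs a test mailbox and is not covered here.

```python
import re
from datetime import datetime, timedelta, timezone

RESET_LINK_TTL = timedelta(hours=24)


def link_is_valid(generated_at: datetime, now: datetime) -> bool:
    """Assumption: the link stops working at exactly 24 hours after generation."""
    return now - generated_at < RESET_LINK_TTL


def meets_complexity(password: str) -> bool:
    """At least 8 characters, at least 1 number, and at least 1 symbol."""
    # Assumption: a symbol is anything other than a letter, digit, underscore, or whitespace.
    return (
        len(password) >= 8
        and re.search(r"\d", password) is not None
        and re.search(r"[^\w\s]", password) is not None
    )


# Boundary checks for expiry, using timezone-aware timestamps.
issued = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
assert link_is_valid(issued, issued + timedelta(hours=23, minutes=59))
assert not link_is_valid(issued, issued + timedelta(hours=24))

# One failing input per complexity rule.
assert meets_complexity("abcdef1!")
assert not meets_complexity("abcde1!")    # only 7 characters
assert not meets_complexity("abcdefgh!")  # no number
assert not meets_complexity("abcdefg1")   # no symbol
```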

🚀 Moving Forward

Writing testable acceptance criteria is a skill that improves with practice. It requires discipline to avoid vague language and a commitment to clarity. By focusing on objective, verifiable statements, QA teams can reduce ambiguity and deliver higher quality software.

Start by auditing your current stories. Identify criteria that rely on opinion or vague metrics. Rewrite them to include specific conditions. Encourage collaboration between roles to ensure shared understanding. Over time, the reduction in defects and rework will validate the effort.

Remember, the goal is not just to write text. The goal is to define quality. When criteria are sharp, testing is efficient, and the product is reliable.